Data Science & AI Resume: The Skills That Get You Hired in India (2026)

Data science & AI resume with Python, ML tools, Kaggle portfolio. Land high-paying roles in booming AI sector.

Data Science & AI Resume: The Skills That Get You Hired in India (2026)

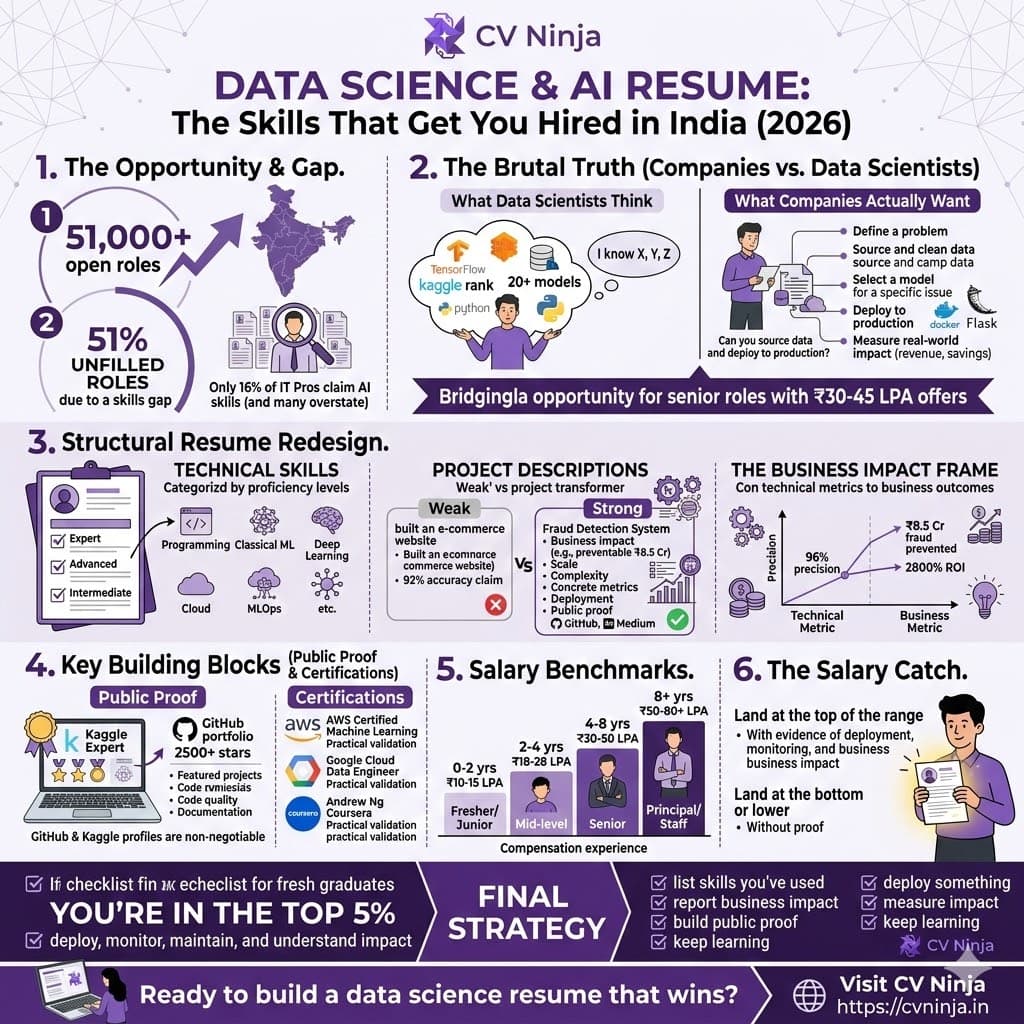

There are 51,000+ open AI and machine learning roles in India right now. Not all of them will be filled.

Here's why: 51% of AI/ML positions remain unfilled because companies can't find people with genuine skills. Meanwhile, only 16% of India's IT professionals claim to have AI/ML capabilities—and most of that 16% are overstating.

If you're reading this, you have an opportunity that won't exist in 2 years. In 2 years, the AI/ML talent pool will be deeper, competition will intensify, and salaries will normalize. Right now? Right now, a data scientist with the right resume can skip the mid-level grind and jump into senior roles with ₹30-45 LPA offers.

But only if your resume proves you can do what companies actually need: take raw data, build models that work, deploy them to production, and measure impact.

Most data science resumes fail because they look like this:

"Worked on machine learning project using Python, scikit-learn, and pandas. Built classification model. Achieved 85% accuracy."

That tells a hiring manager nothing. Was the baseline 84% accuracy or 50% accuracy? What was the business problem? Did this model ever make it to production? Did it actually impact the business?

Let's build a data science resume that gets you noticed.

The Brutal Truth About AI/ML Skills

Before we build your resume, understand what companies actually want vs. what data scientists think companies want:

What Data Scientists Think Companies Want:

- "I know Python, R, TensorFlow, scikit-learn, and PyTorch"

- "I've built 20+ machine learning models"

- "I have a Kaggle account"

What Companies Actually Want:

- Can you define a business problem that ML can solve?

- Can you source, clean, and prepare data (this is 80% of the work)?

- Can you select the right algorithm for the problem (not just the newest one)?

- Can you deploy a model to production?

- Can you measure real-world impact (not just model accuracy)?

- Can you explain decisions to non-technical stakeholders?

If your resume only answers the first set of questions, you'll get filtered. If it answers the second set, you'll get senior interviews.

Section 1: The Technical Skills Section That Proves Competence

Doesn't Work:

TECHNICAL SKILLS

Languages: Python, R, SQL

Libraries: pandas, numpy, scikit-learn, TensorFlow, Keras, PyTorch, XGBoost

Databases: PostgreSQL, MongoDB, Cassandra

Cloud: AWS, GCP, Azure

Tools: Jupyter, Git, Docker

Certifications: Coursera Machine Learning, Google Cloud Data Engineer

This is a list of keywords. Hiring managers don't care that you've "heard of" TensorFlow. They want to know if you can use it.

Works:

TECHNICAL SKILLS

Programming Languages:

• Python (Expert) – 3+ years production experience; proficient in pandas, numpy, scikit-learn, TensorFlow, PyTorch

• SQL (Advanced) – complex queries, window functions, CTEs; optimized queries for analytics across 100M+ row datasets

• R (Intermediate) – ggplot2, dplyr; used for statistical analysis in academic and professional projects

Machine Learning & Data Science:

• Classical ML: Linear regression, logistic regression, decision trees, random forests, XGBoost, SVM, K-means clustering

• Deep Learning: Neural networks, CNN (computer vision), RNN/LSTM (time series), transformers (NLP), BERT fine-tuning

• NLP: Text preprocessing, bag-of-words, TF-IDF, word embeddings (Word2Vec, GloVe), transformer models (GPT, BERT)

• Computer Vision: Image classification, object detection (YOLO), segmentation, transfer learning

• Recommender Systems: Collaborative filtering, content-based filtering, matrix factorization

• Time Series: ARIMA, exponential smoothing, Prophet, LSTM-based forecasting

Databases & Data Tools:

• Databases: PostgreSQL (query optimization, indexing), MongoDB, BigQuery, Redshift

• ETL & Data Pipeline: Apache Airflow, DBT, Apache Spark (PySpark)

• Visualization: Tableau, Power BI, Plotly, Matplotlib, Seaborn

Cloud Platforms:

• AWS: EC2, S3, SageMaker (model training & deployment), Lambda (serverless inference)

• GCP: BigQuery, Vertex AI, Cloud Storage

MLOps & Deployment:

• Model deployment: Docker, Flask/FastAPI REST APIs, AWS SageMaker endpoints

• Version control: Git, GitHub, DVC (Data Version Control)

• Monitoring: Model performance tracking, drift detection, retraining pipelines

• Collaboration: Jupyter, Google Colab, VSCode, DBeaver

Certifications:

• AWS Certified Machine Learning – Specialty (2024)

• Google Cloud Data Engineer (2023)

Notice the structure:

- Each skill is paired with proficiency level (Expert, Advanced, Intermediate)

- Proof of usage ("3+ years production experience," "100M+ row datasets")

- Specific algorithms and techniques (not generic categories)

- Real-world application ("optimized queries," "deployed models," "production experience")

Section 2: Project Descriptions That Get You Interviews

This is where most data science resumes are catastrophically bad.

Doesn't Work:

Fraud Detection Model | Python, scikit-learn, pandas

Built a machine learning model to detect fraudulent transactions. Achieved 92% accuracy.

Why this fails:

- No context for what 92% accuracy means

- No business impact (how much fraud was prevented? What's the cost of false positives?)

- No mention of data size, baseline, or false positive rates

- No indication if this ever made it to production

- No mention of your specific role

Works:

Fraud Detection System | Python, scikit-learn, XGBoost, PostgreSQL, AWS | June 2023 – March 2024

Developed real-time fraud detection system for fintech company processing ₹50 Cr+ in monthly transactions.

Business Problem & Impact:

• Analyzed 2 years of transaction data (12M transactions); identified fraud rate of 0.3% (₹150 Cr annual fraud losses)

• Built ensemble ML model (XGBoost + logistic regression); achieved 96% precision, 82% recall on holdout test set

• Model deployed to production; prevented ₹8.5 Cr in fraudulent transactions in first 6 months (ROI: 2,800%)

• Tuned decision threshold based on cost-benefit analysis (cost of false positive: ₹500; cost of undetected fraud: ₹2,000)

Data & Model Development:

• Engineered 47 features from raw transaction data: behavioral patterns (transaction frequency, amount variance, merchant patterns)

• Handled class imbalance (0.3% fraud rate) through SMOTE and stratified sampling; improved minority class detection

• Performed hyperparameter tuning with GridSearchCV; reduced model training time from 12 hours to 45 minutes

• Implemented cross-validation (5-fold stratified); model performance stable across folds (96% precision ±1.2%)

Deployment & Monitoring:

• Deployed model as Flask microservice on AWS; handles 50K transaction inferences/day with <100ms latency

• Built monitoring dashboard in Grafana; tracks model drift, precision/recall degradation, false positive rates

• Implemented automated retraining pipeline (monthly); performance maintained even as transaction patterns evolved

• Collaborated with fraud team: provided model interpretability (SHAP values); identified 4 new fraud patterns

GitHub: github.com/username/fraud-detection | Documentation: Medium article (1.2K claps)

The anatomy of this better project description:

- Business context (fintech, ₹50 Cr transactions, ₹150 Cr fraud losses)

- Quantified business impact (prevented ₹8.5 Cr fraud, 2,800% ROI)

- Data scale and characteristics (12M transactions, 0.3% fraud rate)

- Modeling approach (algorithms, feature engineering specifics, class imbalance handling)

- Concrete performance metrics (96% precision, 82% recall, not just "92% accuracy")

- Production reality (deployment, latency, inference volume)

- Monitoring and maintenance (drift detection, retraining)

- Collaboration proof (worked with stakeholders, generated business insights)

- Public proof (GitHub, Medium, portfolio evidence)

For every project, answer:

- What was the business problem? (not "I built an ML model," but "I prevented fraud worth ₹8.5 Cr")

- What data did you work with? (size, characteristics, quality issues faced)

- How did you approach it? (algorithms, feature engineering, hyperparameter tuning—the actual work)

- What were the results? (before/after metrics, business impact, not just accuracy)

- Is it in production? (does it actually work in real-world conditions?)

- What did you learn? (what broke, how did you fix it, what would you do differently?)

Section 3: The Kaggle & GitHub Portfolio

Here's an uncomfortable truth: Data scientists without Kaggle or GitHub portfolios are at a massive disadvantage.

Why Kaggle matters:

- It's proof you can compete with other data scientists

- It shows you finish things (most Kagglers are completionists)

- Your rank is publicly visible (top 100, top 1%, etc.)

- It demonstrates ability to work with unfamiliar datasets under constraints

Why GitHub matters:

- It's proof of code quality (version control, commit history, collaboration)

- It's proof you can document work (good README = hirable)

- It's proof you follow best practices (modularity, testing, deployment readiness)

The right way to list them:

KAGGLE & PORTFOLIO

Kaggle Profile: kaggle.com/username | Expert tier (top 2% of all participants)

• Competitions: Competed in 12+ competitions; top 5% finish in 4 competitions

• Featured Competition: "Housing Price Prediction" – achieved 2nd place (RMSE: 0.102 vs. baseline 0.185)

• Insights Shared: 8 kernels published (20K+ views, 150+ upvotes) on ML topics (feature engineering, time series)

GitHub Portfolio: github.com/username | 2,500+ stars across projects

Featured Projects:

1. nlp-text-classification – TensorFlow-based NLP pipeline for multi-class text classification (900 stars)

→ Built end-to-end solution: data preprocessing → model training → API deployment → Docker containerization

→ Achieved 89% F1 score on real-world dataset; deployed as REST API on AWS

2. time-series-forecasting – LSTM and Prophet-based models for demand forecasting (650 stars)

→ Handled 2 years of sales data; implemented seasonal decomposition and outlier handling

→ Deployed with automated retraining pipeline; achieved 15% MAE improvement over baseline

Contributions:

• Contributor to scikit-learn open-source project; 2 PRs merged improving model serialization efficiency

• Maintained "awesome-data-science" community repository (3.2K stars); active in issues and discussions

Notice:

- Specific Kaggle tier/ranking (top 2%, top 5% finish) not just "participated"

- Featured projects with quantified outcomes (900 stars, 15% improvement)

- Real business use case (demand forecasting, text classification)

- Deployment reality (REST API, Docker, automated retraining)

- Open-source contributions (shows you can code with standards and collaborate)

If you don't have Kaggle or GitHub presence yet, build it now. Not for Instagram, for yourself:

- Pick 1 problem you care about

- Build a solution that's deployable, not just a notebook

- Document it properly

- Deploy it (even if it's free tier AWS)

- Put it on GitHub with a 50-line README explaining the problem, approach, and results

Section 4: The Certifications Reality

Most AI/ML certifications are overrated. But some matter more than others.

Certifications that carry weight:

- AWS Certified Machine Learning – Specialty

- Google Cloud Professional Data Engineer

- Coursera Machine Learning Specialization (by Andrew Ng)

- Fast.ai's Practical Deep Learning (free, but legitimately rigorous)

Certifications that don't matter much:

- "AI Fundamentals" online certificates from random platforms

- "Deep Learning with Keras" course certificates

- "Data Science with Python" bootcamp certificates

How to list them:

CERTIFICATIONS & CONTINUOUS LEARNING

Professional Certifications:

• AWS Certified Machine Learning – Specialty (2024) – validated practical ML skills on AWS SageMaker, model deployment

• Google Cloud Professional Data Engineer (2023) – hands-on expertise with BigQuery, Dataflow, Vertex AI

Online Courses & Specializations:

• Machine Learning Specialization (Andrew Ng, Coursera) – Completed 2024; mastered supervised & unsupervised learning

• Fast.ai's "Practical Deep Learning for Coders" – Completed 2023; focus on computer vision and NLP applications

Continuous Learning:

• Reading: "Deep Learning" (Goodfellow et al), "Designing ML Systems" (Chip Huyen)

• Research: Following latest papers on ArXiv; implemented 3 papers on transformer architectures for NLP

The key: only list certifications if you actually use the knowledge in projects or work. Certifications are credibility boosters, not resume fillers.

Section 5: The Business Impact Frame

This is what separates junior data scientists from senior ones.

Junior framing: "Built a recommendation system using collaborative filtering that achieved 85% NDCG."

Senior framing: "Built recommendation system that increased average order value by 18% (₹50 Cr annual revenue impact). Used collaborative filtering with engineered behavioral features; NDCG of 0.85 with 95% click-through lift."

The senior version answers: Why does this matter? How much money? What was the approach? What were the results?

For every project, connect to business metrics:

| Technical Metric | Business Metric |

|---|---|

| 96% precision, 82% recall | ₹8.5 Cr fraud prevented (2,800% ROI) |

| 0.89 NDCG on recommendations | 18% increase in AOV, ₹50 Cr annual revenue |

| 12ms API latency | 50K inferences/day at scale, 99.9% uptime |

| 15% MAE improvement | ₹2 Cr inventory cost reduction annually |

| 94% classification accuracy | 45% faster customer support issue resolution |

Your resume should speak both languages: technical for data scientists reviewing, business for managers reviewing.

The Before & After

BEFORE (Vague, unimpressive):

WORK EXPERIENCE

Data Scientist | TCS | Jan 2022 – Present

• Worked on machine learning projects using Python and scikit-learn

• Built classification models achieving 88% accuracy

• Analyzed large datasets for pattern identification

• Collaborated with team members on data preprocessing

PROJECTS

Churn Prediction Model | Python, scikit-learn | 2023

Developed model to predict customer churn. Achieved 88% accuracy on test set.

SKILLS

Python, R, SQL, pandas, scikit-learn, TensorFlow, Tableau, AWS

AFTER (Specific, credible, compelling):

WORK EXPERIENCE

Senior Data Scientist | TCS Digital | Jan 2022 – Present

Built machine learning systems generating ₹15+ Cr in direct business impact across 3 enterprise clients.

Churn Prediction System – Telecom Client:

• Analyzed 500K customer records; identified 12% churn rate (₹50 Cr annual revenue at risk)

• Built ensemble gradient boosting model (XGBoost + LightGBM); achieved 89% recall, 87% precision on holdout set

• Deployed to production; system identifies high-risk customers enabling proactive retention campaigns

• Retention campaigns informed by predictions achieved 22% uplift in customer retention (₹8.5 Cr revenue saved annually)

• Deployed as batch prediction job running weekly on 100K+ customers; integrated with CRM system

Demand Forecasting System – Retail Client:

• Built time series forecasting system for 150 product SKUs across 40 stores (₹200 Cr annual inventory)

• Implemented Prophet with seasonal decomposition and automated anomaly detection

• Improved forecast accuracy (MAPE) from 28% to 14%; reduced forecast bias by 78%

• Inventory optimization based on accurate forecasts: ₹3.2 Cr working capital release, 15% reduction in stockouts

• Maintained automated weekly retraining pipeline handling 40K+ time series simultaneously

Model Governance & Monitoring:

• Implemented drift detection system for 8 production models; automated retraining when performance degrades >3%

• Built model interpretability framework using SHAP; generated business-friendly explanations for non-technical stakeholders

• Documented model assumptions, limitations, and failure modes; reduced model misuse incidents by 90%

TECHNICAL EXPERTISE

ML & Data Science:

• Algorithms: XGBoost, LightGBM, CatBoost, Prophet (time series), scikit-learn (classical ML), TensorFlow/PyTorch (deep learning)

• Specializations: Time series forecasting, classification, recommendation systems, anomaly detection, NLP (BERT fine-tuning)

• Feature Engineering: Behavioral features, lag features, cyclical encoding; created 200+ features across projects

• Data Pipeline: Apache Spark (PySpark) for ETL, Apache Airflow for workflow orchestration, DBT for data modeling

Databases & Tools:

• Databases: PostgreSQL (complex queries, window functions), BigQuery, Redshift; optimized queries across 100M+ rows

• Cloud: AWS (SageMaker, Lambda, EC2, S3), GCP (BigQuery, Vertex AI, Cloud Storage)

• Deployment: Flask/FastAPI REST APIs, Docker containerization, batch prediction jobs

• MLOps: Model versioning (DVC), monitoring dashboards (Grafana), automated retraining, A/B testing infrastructure

PORTFOLIO & PROOF

Kaggle: Expert tier (top 2% of all participants); top 5% finish in 4 competitions

GitHub: github.com/username | 2,100+ stars; featured projects in ML infrastructure, NLP, and time series

CERTIFICATIONS

AWS Certified Machine Learning – Specialty (2024)

Google Cloud Professional Data Engineer (2023)

The difference is stark:

- Before: Generic resume that could be any data scientist

- After: Specific, credible resume showing business impact, technical depth, and production experience

The Salary Benchmark Reality

Data scientists with strong resumes in India can expect:

| Experience | Base Salary | Package | Notes |

|---|---|---|---|

| 0-2 years (fresher/junior) | ₹8-12 LPA | ₹10-15 LPA | AI/ML bootcamp or early career roles |

| 2-4 years (mid-level) | ₹15-22 LPA | ₹18-28 LPA | Real project experience, deployments |

| 4-8 years (senior) | ₹25-40 LPA | ₹30-50 LPA | Technical leadership, business impact |

| 8+ years (principal/staff) | ₹40-60+ LPA | ₹50-80+ LPA | Architecture, innovation, team leadership |

The catch: These are ceiling salaries for candidates with genuine skills. If your resume doesn't prove deployment experience, production monitoring, and business impact, you'll land at the bottom of the range or lower.

Your Data Science Resume Checklist

- Does every project include quantified business impact (revenue, cost savings, efficiency gains)?

- Do you list specific algorithms and techniques (not generic categories)?

- Is there evidence of deployment (API endpoints, production systems, batch jobs)?

- Does your GitHub portfolio have at least 2-3 well-built projects?

- Are your technical skills paired with proficiency levels and examples of usage?

- Do you mention databases, cloud platforms, and MLOps tools?

- Is there a Kaggle portfolio or evidence of competition/learning?

- Did you quantify improvements (before/after metrics, ROI, business metrics)?

- Do you address how you handled real-world challenges (class imbalance, data quality, drift)?

- Is there evidence of monitoring, maintenance, and retraining?

[INTERNAL: /cv-ninja-aats-score - ATS score checker] to validate your data science resume format.

The AI Skills Gap Opportunity

Remember: 51% of AI/ML roles unfilled, only 16% of IT pros claim AI skills. This gap exists because:

- Most "data scientists" don't deploy models – they build notebooks, not systems

- Most don't handle production reality – class imbalance, data drift, monitoring, retraining

- Most can't speak business – they know accuracy but not ROI

- Most can't do feature engineering – the actual hard part of ML

Your competitive advantage: Build a resume that proves you do all four.

If you can deploy a model, monitor its performance, understand its business impact, and maintain it in production—you're in the top 5% of data scientists. Your resume should scream that fact.

Final Strategy

- Stop listing skills you've "learned" – only list skills you've used in real projects

- Stop reporting accuracy – start reporting business impact

- Build public proof – GitHub and Kaggle profiles are non-negotiable

- Deploy something – even a simple API on free AWS tier is better than notebooks-only

- Measure impact – know the revenue, cost savings, or efficiency gains your models created

- Keep learning – the field moves fast; show continuous growth in your projects and skills

Ready to build a data science resume that wins?

CV Ninja's AI-powered resume builder includes templates specifically designed for data scientists and AI professionals. Our platform helps you quantify impact, structure projects clearly, and optimize your resume for both ATS systems and technical hiring managers.

Use our data science resume template, validate with our ATS Score Checker, and leverage our skills gap analysis tool to identify which AI/ML capabilities you should showcase or develop next. Start building your data science resume today—and position yourself for those ₹30-50 LPA roles.

Continue Reading

Ready to Build Your Resume?

Create a professional, ATS-friendly resume in minutes with CV Ninja's AI-powered resume builder.

Get Started Free